Contemplating a Switch to 400 VDC for Data Centers

Direct current saves time, money, energy, space, and the environment. All we need to get there is new standards.

Jonas Bachmann, SCHURTER R&D Engineer for Appliance Couplers

Converting, transforming, converting, transforming — vast amounts of unused electricity simply disappear in data centers. Switching the power supply to direct current could bypass a large proportion of these losses, but would require a paradigm shift. It’s a worthwhile shift to make, though. Saving energy is not just good for the health of our environment; it’s good for business. Shifting to a conservation mindset would lead to a lean power system architecture that, through skillful planning, can save costs, time, resources, and effort.

The fact that we almost always mean alternating current when we talk about mains electricity doesn’t mean it will remain that way. During the “War of the Currents” at the end of the 19th century, two opposing camps fought to set the standard, marking the first format war in industrial history. Nikola Tesla and George Westinghouse advocated for alternating current (AC), while Thomas Alva Edison made a strong case for direct current (DC). We know how the story ends, but the outcome was not so clear at the time.

Edison’s defeat did not spell the end for DC. Many DC-operated devices are still in use today, including entertainment electronics, industrial IT, communication technologies, electric vehicles, and other mainstays of the digital age. At the other end of the energy supply chain, generation technologies are evolving quickly alongside the established AC primary power chain. Some of these, such as photovoltaics, fuel cells, and wind farms, supply DC. Even in the transmission of power, there is an important exception to the otherwise prevailing AC: high-voltage direct current (HVDC) transmission systems, which enable the low-loss bulk transmission of electrical power over long distances.

Thus, the use of direct current is once again on the rise. More and more electricity takes DC form at least once on its way through generation, transmission, storage, and use. Some conversions are technically necessary, for example to step down voltage. In many other cases, AC voltages and frequencies are still used only because the existing infrastructure was built on years of AC power standardization. Every one of these conversions causes power losses, wasting energy and generating unnecessary heat.

According to an independent British report from 2016, data centers consume approximately 3% of the world’s electricity and account for 2% of total greenhouse gas emissions. This ecological footprint corresponds to that of the airline industry, which is often vilified for similar numbers. In fact, data centers have recently used significantly more electricity than the entire UK: 416.2 terawatt hours, compared with about 300 terawatt hours for all of England, Scotland, Wales, and Northern Ireland, home to roughly 65.65 million people.

Data centers are real energy guzzlers. Power usage effectiveness, or PUE, is the usual metric for evaluating data center efficiency. It is the ratio of the total energy consumed in a data center to the energy consumed by its computers. A PUE value of 1.3, for example, means that for every unit of energy that reaches the computers, another 0.3 units go to overhead such as cooling and power conversion. This may sound like a lot, but this value is, in fact, excellent. Values of around 2 or higher are more the rule than the exception, and are much less desirable.
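To make the PUE arithmetic concrete, here is a minimal Python sketch. The function names and the sample energy figures are illustrative assumptions, not measurements from any particular data center. It shows that a PUE of 1.3 corresponds to overhead equal to 30% of the computing energy, which is about 23% of the total energy drawn.

```python
def pue(total_facility_kwh: float, it_equipment_kwh: float) -> float:
    """Power usage effectiveness: total facility energy divided by IT energy."""
    if it_equipment_kwh <= 0:
        raise ValueError("IT equipment energy must be positive")
    return total_facility_kwh / it_equipment_kwh


def overhead_share_of_total(pue_value: float) -> float:
    """Fraction of total facility energy that does not reach the IT equipment."""
    if pue_value < 1:
        raise ValueError("PUE cannot be below 1")
    return 1 - 1 / pue_value


# Hypothetical example: 1,300 kWh drawn in total, 1,000 kWh used by the servers.
example_pue = pue(1300.0, 1000.0)
example_share = overhead_share_of_total(example_pue)
print(f"PUE = {example_pue:.2f}, overhead share of total = {example_share:.1%}")
```

At a PUE of 2, the same calculation shows that half of all the energy drawn never reaches the computers.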

Why Do Energy Losses Occur?

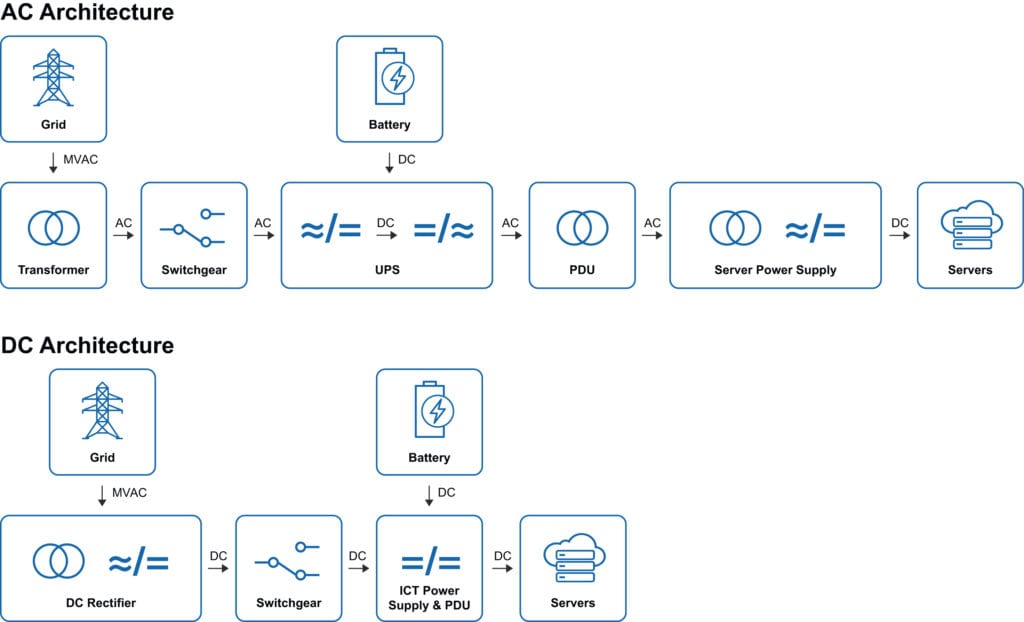

Losses occur throughout data center systems. Energy is lost in processors, in cooling, in air conditioning, and also in the distribution of power. Traditional data centers draw their energy from a medium-voltage line (AC). This alternating voltage is first transformed down and converted to DC in order to feed the batteries in the uninterruptible power supplies (UPSs). After a further conversion back into AC, it continues to the power distribution units (PDUs). These feed the individual power supplies of the end users (servers) with alternating current, which, in turn, has to be converted once again, since servers run on direct current.

Each conversion or transformation from AC to DC and back again dissipates energy as heat. The problem compounds, because this heat must be removed by cooling equipment, which requires additional components that draw power and generate more heat themselves.

Supplying a data center with DC is the obvious approach. If a server is already working off DC power, it makes sense to continue using it as consistently as possible throughout the chain, from the grid to the chip. The incoming medium voltage (AC) is transformed down and then converted to DC by a high-power rectifier. This supplies the batteries for UPSs and then transfers the power to DC switchgear for further distribution. In the last step, the DC supply is stepped down to the usual DC supply voltages used inside the server.
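Because the stages in each chain are connected in series, the overall efficiency is the product of the individual stage efficiencies. The following Python sketch compares the two architectures on that basis. The stage names follow the description above, but every efficiency value is a hypothetical placeholder chosen only for illustration, not a measured or published figure.

```python
from math import prod


def chain_efficiency(stage_efficiencies: dict[str, float]) -> float:
    """Overall efficiency of conversion stages connected in series."""
    for name, eta in stage_efficiencies.items():
        if not 0 < eta <= 1:
            raise ValueError(f"efficiency of {name!r} must be in (0, 1]")
    return prod(stage_efficiencies.values())


# Placeholder values (assumptions for illustration only).
ac_chain = {
    "transformer": 0.98,
    "ups_rectifier": 0.96,      # AC to DC, feeds the UPS batteries
    "ups_inverter": 0.96,       # DC back to AC
    "pdu": 0.99,
    "server_power_supply": 0.92,  # AC to DC again inside the server
}
dc_chain = {
    "transformer": 0.98,
    "high_power_rectifier": 0.96,  # single AC to DC conversion
    "dc_switchgear": 0.99,
    "server_dc_dc": 0.95,          # step-down to server supply voltages
}

for label, chain in (("AC", ac_chain), ("DC", dc_chain)):
    print(f"{label} chain: {chain_efficiency(chain):.1%} of input power reaches the server")
```

The point of the sketch is structural: each stage removed from the chain removes one factor below 1 from the product, so a shorter chain loses less even when the remaining stages are no better individually.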

DC power architecture contains significantly fewer components than AC power architecture. According to calculations and studies by ABB, Amstein + Walthert, and Stulz, eliminating various transformations and conversions yields a 10% increase in efficiency from the supply to the server. Investment costs for the electrical infrastructure can be expected to fall by around 15%. DC electrical infrastructure also requires considerably less space, with an expected reduction of 25%.

Systems based on fewer components are faster to install, cause fewer errors, and are easier to service. This makes them more reliable, and therefore cheaper, in terms of both purchase and maintenance costs. According to a study conducted by Nippon Telegraph and Telephone (NTT), overall system reliability is expected to increase tenfold due to DC systems being significantly less complex.

DC also improves the quality of the power supply by eliminating problems with unwanted harmonics and harmonic distortion, and it doesn’t require phase compensation or synchronization for coupling various sources and networks. The UPS does not even need its own rectifier and inverter stages, as the batteries in these systems are connected directly to the DC supply.

No sane person would think of building a data center in the middle of a big city. The high price of the land alone makes such a choice impossible. So, it’s out to the countryside we go, and there we can find some interesting additional perspectives. It is so much easier to integrate renewable energy sources like photovoltaics, fuel cells, or wind energy in wide-open spaces, and these sources of energy already provide electricity as direct current.

The Quest for Standards

Data centers around the world already use DC technology, including ones in China, Japan, Germany, Switzerland, and the US. However, there are no binding standards for its use. The International Electrotechnical Commission (IEC) has set out to create the missing link with standardized plug and socket devices according to TS 62735. IEC TS 62735-1, adopted in August 2015, covers power distribution for systems up to 2.6 kW. In December 2016, IEC TS 62735-2 was approved for higher outputs of up to 5.2 kW; at this power level, the connection can no longer be separated under load.

The next step is approval of the device-side equivalent. Efforts are currently being made to create DC plug connections based on the existing AC standard IEC 60320. So far, there are different approaches for DC connectors, but none has been able to prevail while the standards are still pending. Various providers are therefore working together in the IEC standardization body to replace the current proprietary approaches with an internationally recognized standard.

The changeover must be gradual, however, because not all devices can be switched from an AC to a DC supply at once. To this end, solutions that can feed a device with either an AC or a DC supply are actively being sought. The power supply units of the devices can already process both supply voltages, but all of the appropriate safety precautions must be taken.

Where there is light, there are also shadows. This old adage also applies to 400 VDC data centers. Long-term operating experience is still lacking, and the skills and knowledge that enable this technology need to be collected and honed in the coming years.

DC components are not yet widely available, and planning needs a new approach. The use of a DC supply requires integral planning from the grid to the chip and everything in between.

Losses such as heat still occur in a DC system, so cooling remains necessary, and the cooling systems should run on the DC supply as well. Air conditioning systems, fire protection systems, access control systems, and building control systems are also needed, and should likewise be equipped for DC operation.

This switch will also require a great deal of cooperation. Cooperation is the fastest and most effective way to achieve defined and established standards, and everyone, right on down to data center operators, benefits from the potential rewards of cooperation.

A Better Source of Power

Data centers that use direct rather than alternating current have enormous potential. Not only does this shift offer the potential to save energy, it also, and to the same degree, yields savings on costs, space, resources, and time. In some situations, the supply of renewable energy sources offers the possibility of providing DC electricity directly to data centers, without the need for additional transformation or conversion processes. In addition to cost reductions, another factor must be clearly emphasized and should be given at least equal weight: The quality of DC-level power is quantifiably better. As such, switching to 400VDC data centers, governed by internationally recognized standards, will result in the use of fewer components and, ultimately, greater reliability.
