Top 12 Technology Trends: The Evolution of High-Speed Data Transfer

The connector industry continues to refine backplane and I/O connectors to provide a migration path to the next generation of high-speed architectures.

This is the fourth in a series of articles that review leading technology trends that have had a significant impact on the electronic connector industry.

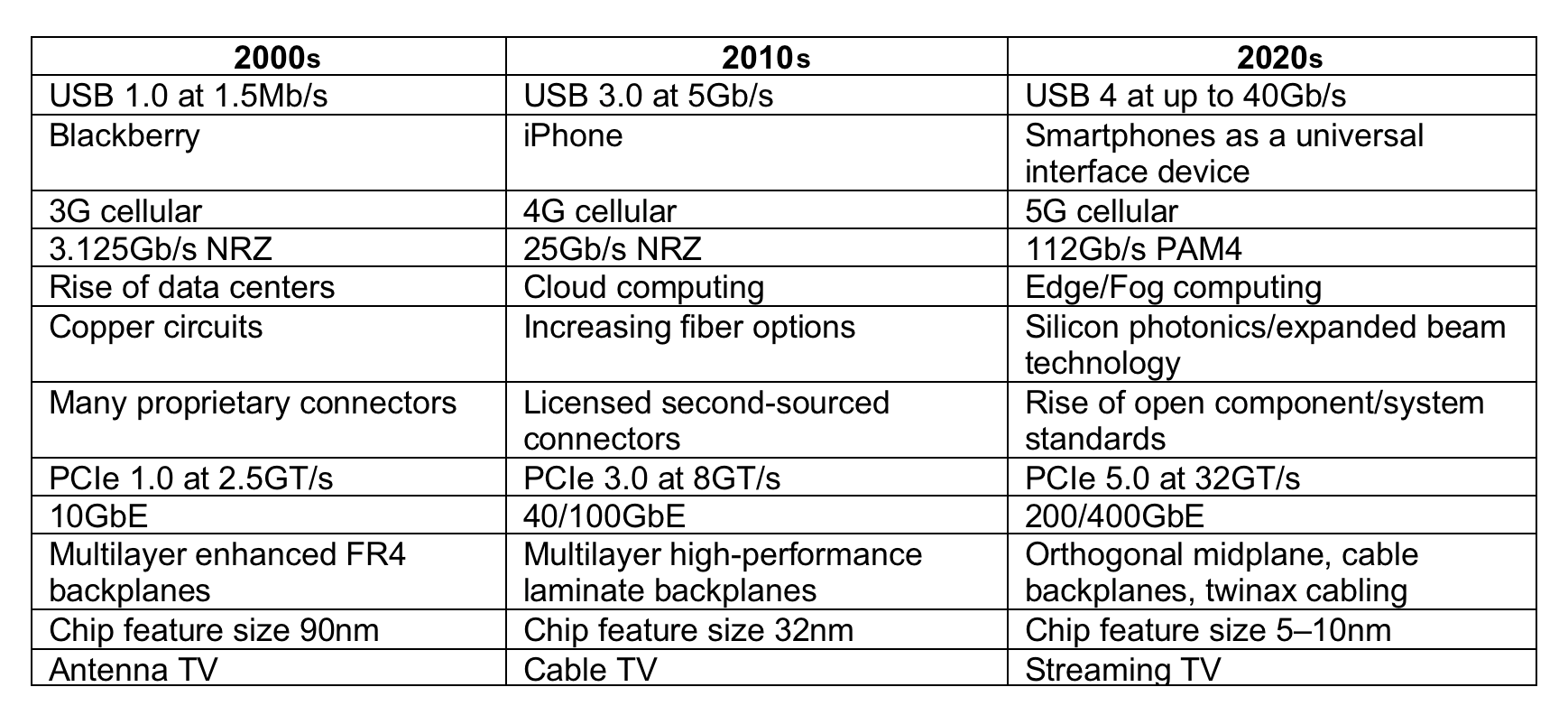

3.125Gb/s NRZ to 112Gb/s PAM4

It has always been about speed. From the earliest days of electronic computing and communications, engineers have continually pushed the bounds of established technology to increase the bandwidth of the device. Faster data transfer enables a computer to push ones and zeros more efficiently to solve complex problems in less time. Communication systems that transfer data more quickly can increase capacity. Few system designers have ever been satisfied with what a current product features in terms of speed.

Performance of electronic devices is often rated in terms of the number of bits transferred per second. Kilobits per second became megabits per second as engineers found ways to switch voltage levels more quickly.

“High-speed” is a relative term that has constantly evolved from a few hundred bits per second to single channels that feature transfer rates of up to 112Gb/s. Simple DC connections became transmission lines where impedance control was essential. As the difference between the signal levels of a one and a zero became smaller, induced noise threatened to corrupt the data. The industry moved from single-ended signaling to differential signaling, where taking the difference in voltage levels between two conductors cancelled external interference common to both. As speeds increased further, shielded twisted-pair cable became the preferred medium. Traditional connectors designed around a standard grid were modified by coupling signal lines in close pairs and adding a shield.

Immense strides in signal conditioning features at the chip level — including compensation, equalization, and forward error correction — enabled engineers to reliably detect high-speed signals in longer channels. These tools are used to compensate for the negative effects of crosstalk, reflections, jitter, skew, and simple attenuation. Advanced channel performance measurement tools and protocols were introduced, including eye diagrams, scattering parameters (S-parameters), bit error rates, and channel operating margin, to quantify the ability of a system to perform to specification. Signal integrity engineers became highly sought-after.

The 10 Gigabit Attachment Unit Interface (XAUI) defined by IEEE 802.3ae pushed the boundary of speed to deliver 10Gb/s over four differential pairs running at 3.125Gb/s each. The connector industry continued to refine backplane and I/O connectors to provide a migration path to ever higher speeds. PCB material was enhanced with better loss characteristics. The transition between a connector and the PCB was identified as a significant source of noise, so the size of compliant pins was reduced to minimize the diameter of the plated through hole. Back drilling the hole reduced reflections. Connector manufacturers published reference designs for the connector launch footprint. Every millimeter of circuit path length through the connector was optimized to minimize loss and distortion.
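The XAUI figures can be reconciled with a quick calculation. Four lanes at 3.125Gb/s add up to 12.5Gb/s on the wire, and XAUI's 8b/10b line coding carries 8 payload bits in every 10 transmitted bits, which leaves 10Gb/s of payload. The sketch below is illustrative only; the function name `payload_rate_gbps` and its parameters are made up for this example.

```python
def payload_rate_gbps(lanes, line_rate_gbps, payload_bits=8, coded_bits=10):
    """Aggregate payload rate of a multi-lane link with block line coding.

    Hypothetical helper for illustration. The defaults model 8b/10b coding,
    where 8 payload bits are sent as 10 bits on the wire.
    """
    raw_rate = lanes * line_rate_gbps            # total bits on the wire
    return raw_rate * payload_bits / coded_bits  # remove coding overhead


# XAUI: four lanes, each running at 3.125Gb/s
xaui = payload_rate_gbps(lanes=4, line_rate_gbps=3.125)
print(f"XAUI payload rate: {xaui} Gb/s")
```

The same arithmetic explains why a lane's line rate is usually somewhat higher than the payload rate quoted for it.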

The result was a race to ever higher connector performance ratings. By 2010, manufacturers were promoting connectors designed to perform at speeds of up to 25Gb/s. At the time, few applications required that level of performance, but the ability to offer connectors that could satisfy system needs of today, as well as several generations in the future, was an attractive proposition.

Over the last 10 years, demand for higher speeds began to bump up against the limits of non-return-to-zero (NRZ) signaling. Four-level pulse-amplitude modulation (PAM4) signaling came to the rescue.

Rather than transmitting one bit per symbol, as NRZ does, PAM4 uses four voltage levels to transmit two bits per symbol, effectively doubling the data rate at the same symbol rate. This enables a designer to continue using established design rules and components while greatly increasing the bit rate. When evaluating high-speed connector performance, it has become important to determine whether the ratings are based on NRZ or PAM4 signaling.
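A short sketch makes the difference concrete. It maps the same bit stream to NRZ symbols (two levels) and to PAM4 symbols (four levels). The level values and the Gray-coded mapping of bit pairs to levels are a common convention assumed here for illustration, not taken from any particular product or from this article, and the function names are hypothetical.

```python
# Assumed Gray-coded mapping: adjacent levels differ by only one bit.
GRAY_PAM4 = {(0, 0): -3, (0, 1): -1, (1, 1): 1, (1, 0): 3}


def nrz_symbols(bits):
    """One symbol per bit: 0 -> -1, 1 -> +1."""
    return [1 if b else -1 for b in bits]


def pam4_symbols(bits):
    """One symbol per pair of bits, using the assumed Gray mapping."""
    if len(bits) % 2:
        raise ValueError("PAM4 needs an even number of bits")
    return [GRAY_PAM4[(bits[i], bits[i + 1])] for i in range(0, len(bits), 2)]


bits = [0, 0, 0, 1, 1, 1, 1, 0]
nrz = nrz_symbols(bits)    # 8 symbols for 8 bits
pam4 = pam4_symbols(bits)  # 4 symbols for the same 8 bits
print(len(nrz), "NRZ symbols:", nrz)
print(len(pam4), "PAM4 symbols:", pam4)
```

The PAM4 stream needs half as many symbols to carry the same bits, so at an unchanged symbol rate the bit rate doubles. The cost is that four levels must fit within the voltage swing that NRZ divides into two.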

Demand for ever higher bandwidth is being driven by new applications, including high-performance computing, artificial intelligence, cloud computing, virtual and augmented reality, consumer-generated video, and the Internet of Things (IoT).

Telecom networks and data centers are responding to dramatically increasing traffic flow by upgrading server performance. Faster channels can address I/O panel congestion, but this can also raise thermal management issues. As edge computing increases in popularity, aggregation at the core will demand accelerated processing speeds.

Emerging standards such as 400GbE provide an evolutionary path to supporting higher bandwidth.

Coherent optical transponder modules can deliver up to three 400GbE client signals over a single 1.2Tb/s channel, allowing network operators a smooth transition path from current 100Gb/s to 400Gb/s.

Two years ago, engineers debated the feasibility of 112Gb/s copper channels. Recent industry trade shows featured multiple demonstrations of channels running at 112Gb/s using PAM4 technology. The path to 200+Gb/s channels may involve PAM8 signaling or some yet-to-be-developed breakthrough. Few engineers are willing to define the absolute limit of their ability to creatively push technology to the next level.
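The appeal and the cost of adding levels can be estimated with simple arithmetic. A PAM signal with M levels carries log2(M) bits per symbol, but the spacing between adjacent levels shrinks to 1/(M-1) of the full swing. The sketch below assumes a nominal 56GBd symbol rate, ignores coding and error-correction overhead, and uses hypothetical function names; it is a rough illustration rather than a channel model.

```python
import math


def bits_per_symbol(levels):
    """Bits carried by one symbol of an M-level PAM signal."""
    return math.log2(levels)


def bit_rate_gbps(symbol_rate_gbd, levels):
    """Raw bit rate, ignoring coding and FEC overhead (an assumption)."""
    return symbol_rate_gbd * bits_per_symbol(levels)


def level_spacing_penalty_db(levels):
    """Reduction in spacing between adjacent levels relative to NRZ, in dB,
    assuming the same peak-to-peak swing."""
    return 20 * math.log10(levels - 1)


for name, levels in [("NRZ", 2), ("PAM4", 4), ("PAM8", 8)]:
    rate = bit_rate_gbps(56, levels)
    penalty = level_spacing_penalty_db(levels)
    print(f"{name}: {rate:.0f} Gb/s at 56 GBd, spacing penalty {penalty:.1f} dB")
```

Under these assumptions PAM8 raises the bit rate by half again over PAM4 at the same symbol rate, while the level spacing drops from one third to one seventh of the swing, which is one reason the route beyond 112Gb/s remains an open question.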

Read the third installment in Bob Hult’s Technology Trends series.
